#254: Where Will The AI Tool Upgrade Take Us?

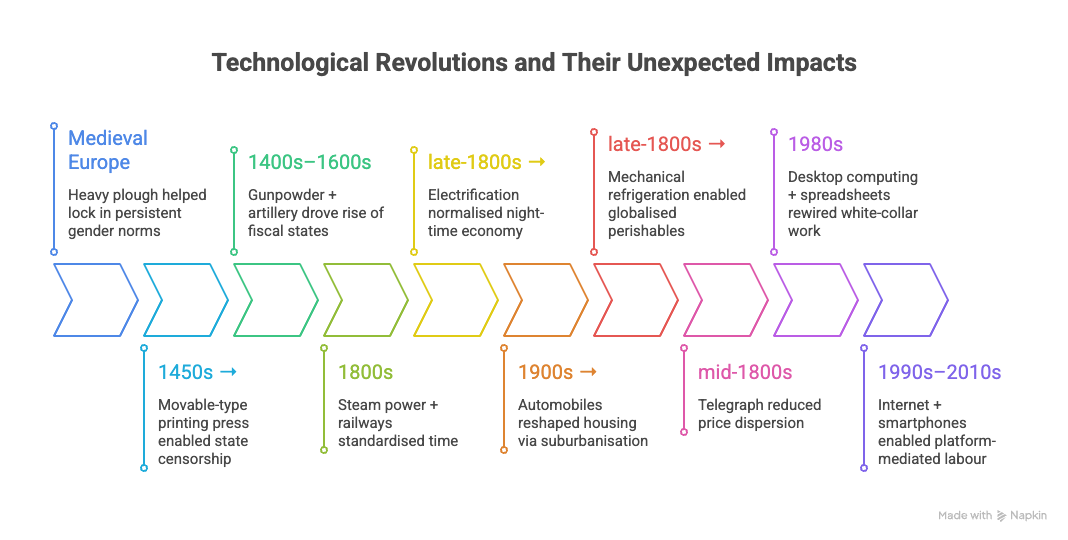

Every historically significant tool upgrade has yielded massive social, economic, political and structural change, which has been unpredictable and subject to the butterfly effect.

It has been said that AI is the biggest tool upgrade we’ve had for centuries. It’s as big as electricity or the printing press. Why does this matter and what should we expect?

Tool Upgrades Through History

To set the context, here’s a quick run-through of some of the big tool upgrades in history: points where there was a fundamental shift in how work got done, along with a look at their wider and often unexpected impact.

The Plough (7500-5000 BCE)

The plough changed how agriculture was done. But because agriculture was the dominant form of labour and work at the time, it effectively changed the economics and the structure of societies.

Heavy ploughing meant coordination, hiring of labour, and multiple oxen, setting up a market for skills and oxen instantly. That meant coordination of schedules, sharing or renting of resources, and village level organisation.

It also led to specialisation and hierarchy through the creation of surplus. Non-farm roles grew, which allowed for the formation of armies and the creation of trading and urban centres. It also structured work around calendars and crop rotation. Gender norms arose with male-biased field labour. The surplus also created the potential for taxation. Land became a significant asset, with inheritance, legal rights, and renting options. Land disputes became significant, and local institutions grew stronger to create more governance and rules. The administrative state ultimately arises from there.

Movable-type printing (c. 1450)

Language was the first point at which an idea could travel from one person’s brain to another’s in a consistent and reasonably lossless form. Writing allowed that to happen asynchronously. But movable type was when ideas could spread at an unfathomably quick rate.

This was the era of affordable as well as quick replication of ideas. Knowledge went from scarce and local to an item of mass consumption. This led to literacy spikes, widely shared narratives, and organisation around big ideas - including religious or political ones. Dissidents and missionaries could both reach wider audiences. The Reformation arose out of this milieu. In this phase, the publication of ideas and principles becomes a necessity for those wishing to stay in power, as well as for those wishing to challenge it. Publishing, mass education, and the early form of organised marketing and public relations at scale are seeded.

Gunpowder (1500s)

This was a significant battlefield power shift. It created state capacity for warfare at a hitherto unknown scale. To defend against this, fortification became a key need even as the creation of arsenals became a necessity.

This allowed the further centralisation of power, as larger and better funded states could afford logistics and standing forces, which in turn depended on taxation. The specialisation in warfare meant specialisation elsewhere. State procurement, sophistication in armed forces, specialist military trades (chemists), and the military-industrial organisation were born. This in turn improved logistics, supply chains, transport networks, and administration and records.

Steam power (1700s/1800s)

This brings us to the idea of mechanisation. The factory system is born, as is urban labour. The side effect of this is slums and ghettos, and poor living conditions and the spread of disease. Urbanisation leads to new class structures, consumer markets, and the scaling of mass produced consumer goods.

Labour politics is born because of the growing self organisation of labour. Work becomes scheduled. The industrial shift divides the day. The high social cost of factories, in terms of pollution and child labour, calls for regulation.

Wage labour needs management and supervision. The org structure emerges. Hierarchies are created, ultimately leading to Frederick Taylor’s time and motion measurement and management principles. The operating model arrives.

Electrification (1800s)

Electricity leads to the assembly line, but also to the reorganisation of the factory away from the central steam shaft.

The assembly line gives us mass production of consumer goods at never-before-seen prices, and mass consumption of a wide array of goods. Electricity also means longer safe operating hours and 24-hour lighting.

Lighting gives us nightlife. Electricity also changes gender roles as home production gets mechanised through appliances, ultimately leading to the emergence of women’s rights - in society, in politics, and in the workplace.

The industrial revolution, driven by steam and electricity, also creates the colonial state and the global dominance of some powers. It leads to extractive practices, and the homogenisation of language (English, Spanish, French) and cultures (sport, music).

Computers (1900s)

From the microprocessor to the mass deployment of desktop computing, computers turbocharged white collar work. They accelerated the role of women in the workplace, and the creation of knowledge work enabled women to take on leadership roles.

The growth of processing power allows for the hyperscaling of financial analysis and information exchange, and the behemoths of the financial world become super powerful.

The moon landing is also really made possible by computers. And women write a lot of the code that makes it possible.

Internet & Smartphones (2000s)

We are mostly familiar with this part of the story: the near zero marginal cost of copying information or data, along with a high performing computer in every pocket.

Context is now universal. Everybody can be tracked, found, located, served, and expect to be contextually dealt with. Identity, community and culture all shift in the context of a networked society. News (especially bad news) spreads instantaneously across the world. Bubbles and echo chambers are born.

Instant self organisation is possible - the Arab Spring is one example. Flash mobs are another. Gig working, remote work, the breakdown of the shift structure, and the fraying of the industrial era all start to become apparent. Privacy becomes tradeable and political.

Also with network technologies, ecosystems become connectable, API driven business models abound. Organisations become technology icebergs.

The Power of the Tool Upgrade

All of this is clear in the rearview mirror, but it is disruptive and anxiety-inducing when it’s happening. The common patterns:

The ramifications of a significant tool upgrade are wide and often amplified like a butterfly effect. The arrival of automobiles took out half of farming work in the US, because that work had gone into feeding the horses required for transport.

The impact is unpredictable - from gender equity, to political upheaval, to industrial organisation - it’s impossible to predict what and where the impact will be. Automobiles also created the urban phenomenon of downtown and suburbs.

Society usually organises itself around how work is done, how we create and use energy, and what we value as a scarce resource - and with each significant tool upgrade, the relationships between these get a reboot. Automobiles made motor fuel a valuable commodity, for example.

Here’s a summary view of some of the unexpected changes through tool upgrades:

So what does this mean for the coming tool upgrade with AI?

The AI Tool Upgrade

What might happen over the next AI enabled era as our tools get one of the most significant upgrades in history? By all accounts this is as big as electricity. Much of this we will only know as it plays out. Looking back in 50 years, it will seem obvious, as multiple possible futures collapse into one actual reality. But for now, let’s imagine some of the weird and non-linear changes. Here are just some scenarios to consider:

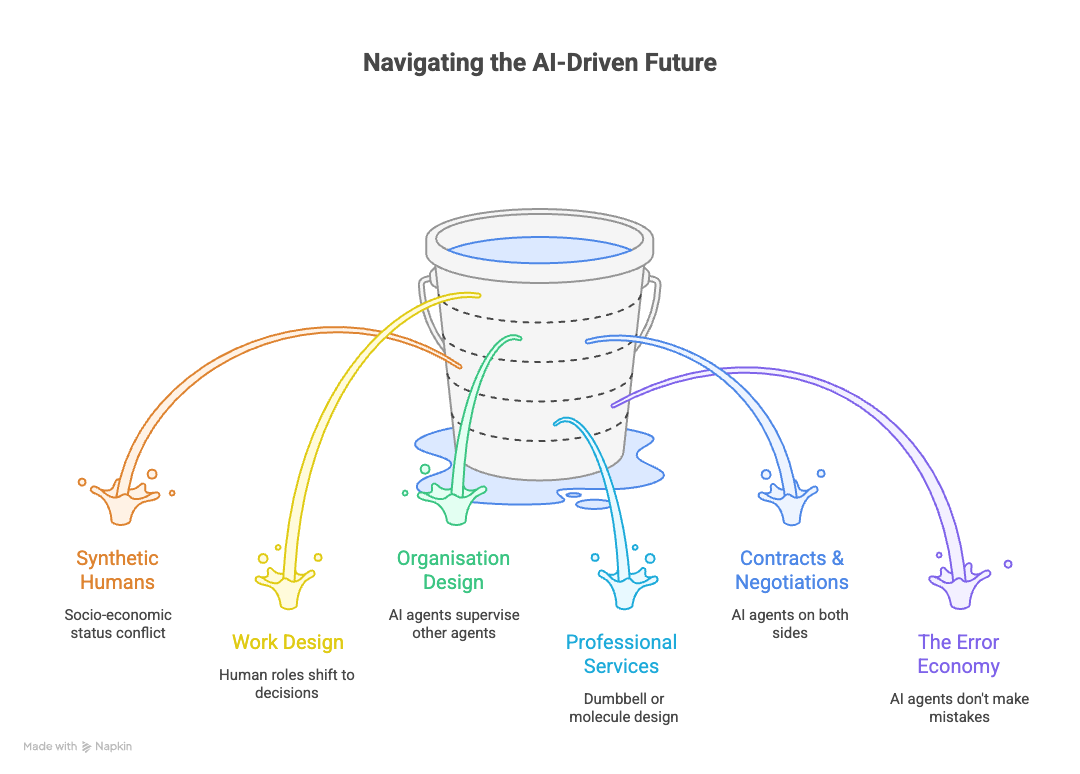

Edge Humans

If you think of a pyramid of socio economic status, with the very rich and powerful at the top, and the socially and economically disenfranchised at the bottom, we might add another layer of synthetic humans right at the end. These will be AI agents but they will have a face, and a voice, and an internet presence. They might even have access to funds. Will they have voting rights? Will there be conflict if these virtual humans start to climb up the pyramid to hold status above real humans? Will the world create more male or female digital humans? Or will gender fluidity become the norm for these virtual humans?

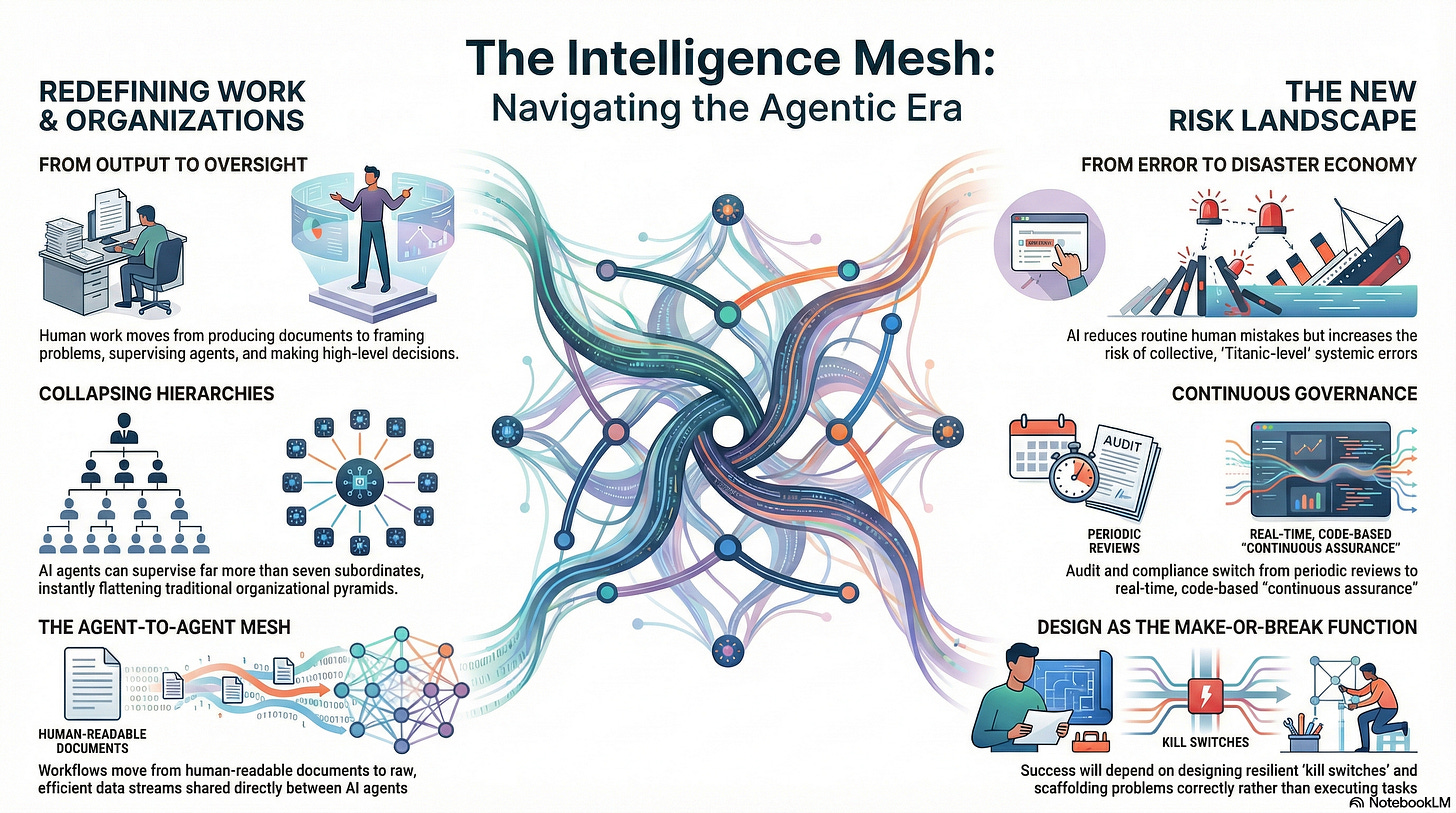

Work Design

A lot of knowledge work involves producing work output - documents, presentations, spreadsheets - and all of this seems an obvious area for AI tools to take over. So human work moves to producing decisions, supervision, and governance. Every meeting gets recorded automatically. Note taking will be irrelevant. Information absorption and decision review will be critical. Remembering drops in value as a differentiating trait. And I can easily see that the focus for humans will squarely be on scaffolding and framing problems. A lot of the output quality depends on how well that scaffolding is done - how we remember to call out the objectives, constraints, conditions, and exceptions. How will all this flow upstream into education?

Organisation Design

We start with the assumption that a lot of work is done by AI agents and humans get to supervise them. But we know today that humans can (or should) manage a maximum of 7 subordinates. How many AI agents can a human supervise? By the time you wrap your head around that, we should ask: why can’t agents supervise other agents? And how many agents can an AI agent supervise? Presumably a whole lot more than 7, instantly collapsing the organisation. Also, clearly the constraint will not be the supply of knowledge work, but rather the demand for it. What will be the future demand for knowledge work? And how will price be set in a world of abundant supply?
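To make the span-of-control arithmetic concrete, here is a minimal Python sketch. The function name, the 1,000-worker workforce and the larger spans are illustrative assumptions, not data: the only figure taken from the text is the span of 7.

```python
def management_layers(workers: int, span: int) -> int:
    """Return how many supervisory layers sit above `workers` front-line
    workers when each supervisor has at most `span` direct reports."""
    if workers < 1 or span < 2:
        raise ValueError("need at least 1 worker and a span of 2 or more")
    layers = 0
    nodes = workers
    while nodes > 1:
        nodes = -(-nodes // span)  # ceiling division: supervisors needed one level up
        layers += 1
    return layers


# Hypothetical workforce of 1,000 workers (human or agent).
for span in (7, 100, 1000):
    print(f"span {span}: {management_layers(1000, span)} layer(s)")
```

With these made-up numbers, a span of 7 needs four layers of supervision, a span of 100 needs two, and a span of 1,000 needs just one. That is the sense in which a wider span flattens the hierarchy.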

What of Professional Services?

Will the future professional services be shaped like a dumbbell? Clumps of human capabilities connected via a spine of logic? Or an extended pods-and-spines network, like a molecule design? Or will it be an intelligent platform governed and run by a few humans, with the actual knowledge processing done by the platform?

How will we learn in this world? If we stop learning by doing, what are the options? We might learn by simulation, as the cost of simulations and game play drops dramatically. Or we learn through teaching, or through accountability instances, or exceptions, or perhaps through the construction of guardrails.

Contracts and Negotiations?

What happens to contracts, lawyers, and negotiation when both sides are using AI agents?

Will information asymmetry disappear? Will we telescope legal processes into multiple offers and counter offers within a single short window of negotiation? Will it become more adversarial? Transactional? Will relationships matter? Will it boil down to information and deal automation and plumbing? Could there be significant collusion or discrimination? For example, a buyer and seller could agree to a contract that benefits both entities economically but negatively impacts a specific group of people in one or both organisations (a specific gender, for example), unless the agents are specifically parameterised to prevent it.

Ultimately will contract performance boil down to who has the better AI or who does better forecasting of the unknown unknowns? Or like a game of chess, will one side find a small misstep and then continuously exploit it for bigger and bigger gains till they declare victory?

The Error Economy

If you think about it, a huge amount of the professional world is built around the ‘error economy’ - things go wrong, people make mistakes. Hence we have insurance, audit, policing, fraud, and big chunks of management and some parts of healthcare. What happens when AI agents by and large don’t make mistakes? Or when they do, they make a collective and significant titanic error? We go from the error economy to a disaster economy, so rollback and kill switches, business continuity, and resilience become far more critical.

What happens to audit? Does it switch to continuous assurance? We’ve already started having this kind of conversation. Governance becomes code, and real time, built into systems. Self describing, with observability and telemetry built in. We will still need to understand the future of liability, intent, and the role of scepticism.
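As a rough illustration of what "governance as code" could look like, here is a minimal Python sketch. Every name in it (`Action`, `Rule`, `Governor`, the payment cap) is a hypothetical example rather than a real framework or an actual policy: each rule carries its own description, each agent action is checked as it happens, and every decision is logged as telemetry.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    agent: str
    kind: str
    amount: float


@dataclass
class Rule:
    name: str
    description: str                  # self-describing: the rule explains itself
    check: Callable[[Action], bool]   # returns True when the action is acceptable


@dataclass
class Governor:
    rules: list[Rule]
    log: list[dict] = field(default_factory=list)  # telemetry of every decision

    def review(self, action: Action) -> bool:
        """Check one action against all rules and record the outcome."""
        failed = [rule.name for rule in self.rules if not rule.check(action)]
        allowed = not failed
        self.log.append({
            "agent": action.agent,
            "kind": action.kind,
            "allowed": allowed,
            "failed_rules": failed,
        })
        return allowed


# Hypothetical policy: payments above 10,000 are blocked pending a human.
rules = [
    Rule(
        name="payment_cap",
        description="Payments above 10,000 need human approval",
        check=lambda a: a.kind != "payment" or a.amount <= 10_000,
    )
]
governor = Governor(rules)
governor.review(Action("agent-7", "payment", 500))     # allowed
governor.review(Action("agent-7", "payment", 50_000))  # blocked
```

The log here is the continuous audit trail: assurance happens on every action rather than in a periodic sample after the fact.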

For objective control of a system we will need to also ask what’s outside the system? What does that even mean when everything is interconnected? And will the focus shift from running to designing as the make or break function?

Baby Steps

These are just baby steps in our thinking. It’s insanely hard to think around the corners of these changes. The second and third order changes which may be significantly amplified in some cases remain out of reach for me so far. I’m going to have to think for a week to imagine some of the ramifications on societal structures, political outcomes and broader anthropological impact. But what we can already see is that maybe AI isn’t just a tool, it might be more than a tool at an individual user level.

Tool or Colleague? Or Perhaps Intelligent Mesh?

What if this is more than a tool upgrade? Or at least a very different thing? What if it’s moving from a tool to an assistant, or to a partner? A colleague?

When I asked ChatGPT 5.2 this question, it gave me some very sage advice. It said:

“tool vs colleague” is mostly a governance choice, not a metaphysical truth. AI feels like a colleague because it talks, remembers context, proposes plans, and can act through tools. But it’s still not a moral agent; it doesn’t hold duties, bear consequences, or carry legal liability. So the pragmatic answer is:

Treat it like a tool for accountability / Design it like a colleague for usability and performance

I was impressed by this.

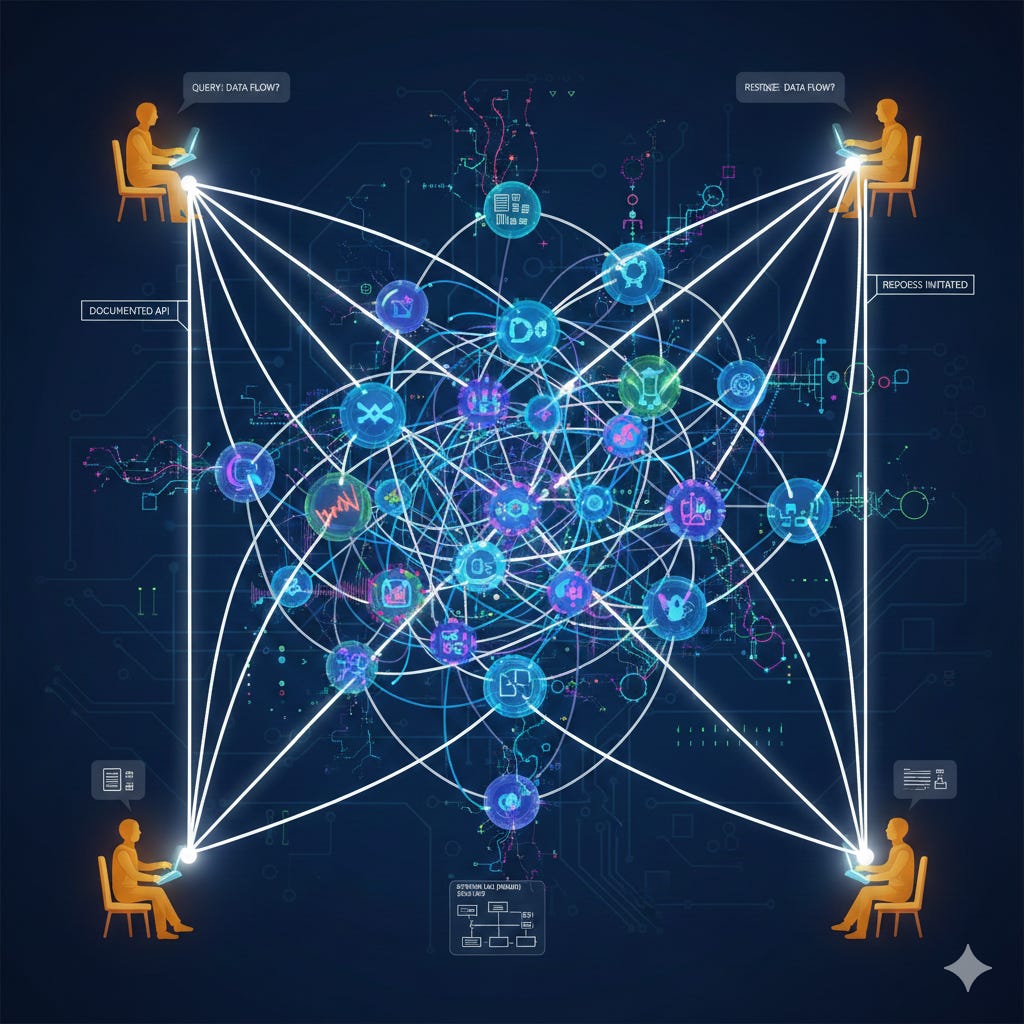

Practically, it makes sense to think about AI not just as a colleague or a tool but also as a connecting intelligence fabric. Let me explain.

Language was the first instance of being able to transfer a coherent, complex idea from one human’s brain to another’s in a reasonably lossless form. But you had to be in direct contact for this to happen.

With writing, and later with printing, it became possible to do this asynchronously, and then at scale. This was quite a big deal. But this is where we still are, 600 years after the invention of movable type. To get an idea from one human’s mind to another’s, it needs to be written down, handed over and absorbed.

This is how the bulk of our workflows work. You think of something, you put it down in some format - language, pictures, words, symbols - and the next person can read it and extract the meaning, or act upon the information. Think of how requirements are captured (document), architectural plans are drawn up (document), legal agreements are signed (still a document).

Now imagine that 2 nodes of a workflow are both AI agents of some description. Why do these agents need documents? They can exchange the most efficient form of raw data, and decipher the meaning. We keep hearing about how AI agents created a new language. Actually that’s just 2 entities figuring out the most efficient way of communicating meaning.

Couples evolve signals, codes, words, and phrases that only make sense to them. They do this over years, without any explicit plan or model. It just happens through shared context, and an inherent need for efficiency. You could write a little handbook of all the shorthands my wife and I use on a weekly basis.

AI agents sometimes just do this at speed. They quickly evolve to a model of shared meaning that allows them to efficiently share information. We could call it a new language.

So back to our workflows. Now you have 2 agents who don’t need to create and re-interpret documents. So we start to redefine workflows into efficient data streams. Humans also create documents to ensure auditability and record keeping, both of which can be done with the data stream.

Which is why the agentic AI workflows may not look like digital versions of the human flows. They are not simply the same tasks done with better tools. They are a reconfiguration of resources to get a complex, multi-nodal activity done in the most efficient manner possible.

So the way 2 agents create an architectural view of a system, a goods manifest, or a design of a building, may not look anything like a human readable view. It may just be a stream of data that makes perfect sense to the agents.

That still leaves the challenge of the agent-human interface, so at any time the system needs to be able to convert this into a human readable format. This will be required for any stage of the workflow where for any reason a human input or approval is essential. Amongst other things, this will act as a safety valve to ensure that a human can step in at any point to see the status of a piece of work or a flow.
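A toy Python sketch of that agent-human interface is below. The compact message format, the field codes and the status codes are all invented for illustration; real agents would settle on their own shorthand. The point is only that a terse machine-oriented record can be expanded into something a person can read whenever approval is needed.

```python
import json

# Hypothetical shorthand two agents might converge on: short keys, coded values.
FIELD_NAMES = {"t": "task", "s": "status", "o": "owner", "n": "needs_human"}
STATUS_NAMES = {0: "queued", 1: "in progress", 2: "awaiting approval", 3: "done"}


def to_human(message: str) -> str:
    """Expand a compact agent-to-agent message into readable lines."""
    record = json.loads(message)
    lines = []
    for key, value in record.items():
        label = FIELD_NAMES.get(key, key)  # unknown keys pass through unchanged
        if key == "s":
            value = STATUS_NAMES.get(value, f"unknown ({value})")
        lines.append(f"{label}: {value}")
    return "\n".join(lines)


# What the agents exchange (made-up example)...
compact = '{"t":"manifest-42","s":2,"o":"agent-b","n":true}'
# ...and what a human reviewer sees on request.
print(to_human(compact))
```

The agents never need the expanded form; it is produced only at the points in the workflow where a human steps in.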

So at least one alternative way of thinking about AI is not as a tool or assistant that shadows the human work, but as an intelligent mesh that transfers ideas and executes workflows at speed using its preferred language and data streams. There’s a whole book broadly on this theme by Sangeet Paul Choudhary.

The Coming Credentials Convergence

One very specific area of early impact seems to be rooted in the ability of AI systems to create very high calibre documents - from papers, to presentations, to blog posts, and resumes.

Think about how the base expectations - the so called “hygiene level” - for documents are changing. For instance, in the world of Grammarly and ubiquitous spell checks and grammar checks, you could argue that leaving basic spelling or grammatical errors reeks of amateurism. Why would you not run a basic check?

(I should mention here that it has been pointed out to me that in my daily posts, I sometimes have typos, but I would not rush through a formal document with the unfiltered stream of consciousness I sometimes use for my posts).

It’s not just perfect documents though, it’s perfect emails, and well organised spreadsheets, and professional grade slides. With tools like Beautiful.ai or NotebookLM, you can produce very high grade slides in minutes. So as a presenter too, having high quality slides is becoming a bare minimum. We’re back to the human presenter being the difference maker in a great presentation.

We have been interviewing for AI engineer and architect roles of late. And one of the issues we’ve uncovered is that everybody has amazing CVs. The resumes are flawless and apparently perfectly suited for the roles we’re looking for. Only problem: they don’t necessarily bear any relation to the reality of the candidates’ skills. “What’s a RAG?”, “How does a RAG differ from a reasoning model?” - just some initial questions that seemed to stump people who on paper were AI practitioners!

So here we hit the core of our problem. We have historically considered the quality of a professional artefact as a surrogate for the quality of the person behind it. The writer, the wordsmith, the designer, the thinker, the analyst - in fact our primary way of judging them has been to evaluate the quality of their production. Suddenly, that is no longer relevant. Everybody has excellent output. So now you have to actually see them perform their work, to truly judge them. Welcome to the world of performative evaluation.

It’s a bit analogous to the music industry, where thanks to technology (digitisation, copying, and distribution/streaming), the recorded content has gotten financially devalued, so musicians have had to drive revenues through performances, rather than recorded work. In much the same way, AI seems to be pushing the evaluation of white collar work towards the performance rather than the output. The effective (and differentiated) use of AI tools may in fact become a part of the performance, in future. You will be asked in an interview to write a prompt to produce a high calibre output under the watchful eyes of a jury of evaluators.

There is a secondary problem - which is the hypothesis that AI drives a level of homogeneity in the output. Over time, everybody’s CV doesn’t just look great, but they all start looking like each other because they’ve all been put through similar AI tools to make them suitable for a particular job. Documents, presentations, and all other knowledge artefacts also start to look and feel similar and undifferentiated as human diversity gives way to AI’s shared probability functions.

But this may be a human problem caused by all humans trying to compete against the same yardsticks in the same way. It’s the humans prompting the AI to produce something that meets a universally accepted common benchmark. To solve that problem, humans will need to exercise their differences and learn to ask the AI a wider set of questions and build individuality into their prompting.

Maybe at that point, we will start to once again see the differences in the quality of the output itself. But we may never go back to wholly evaluating people’s capabilities based on the artefacts they generate. That ship, I feel, has sailed like the Titanic, if you will permit me to mangle my metaphors! Would an AI do that?

Other Reading

Robotics for elderly social care - enabling older people to stay at home longer. (NYT)

Governance as code - the idea of Bayesian audits, based on probability and baked into your systems - this is one of the better articulations of the future of AI governance. (Substack Francesco Orsi)

AI Bullying Humans? What happened when engineer Scott Shambaugh refused a code commit from an AI bot and it decided to write derogatory public posts about him. (WSJ)

Ineffable AI Start Up: Former DeepMind scientist David Silver has set up Ineffable AI - to build next generation AI through reinforcement learning. Funded by Sequoia, amongst others, and valued at $4bn pre-money. (FT)

Superintelligence - Go Now/ Go Never: In a recent paper, Nick Bostrom weighs up the cost of waiting for the right governance (lives lost), versus the risk of AI driven annihilation (pDoom), when it comes to superintelligence. (Nick Bostrom)