As we reach the end of the first month of 2024, I thought I’d take a look at where we’ve gotten to with AI, given the winds (storms?) of change constantly blowing through the industry. Some signals can also be extracted from all the noise at Davos.

Let’s first consider the big picture of AI and the risk of apocalypse:

AI Apocalypse —> AI Safety

While it’s fashionable and meme-inducing to speak about the AI Apocalypse and the end of jobs or even the end of humanity, it’s probably more important to speak about controlling AI harms in the short term. Even if there were an apocalypse, it would probably be the culmination of unchecked harms. Sam Altman made the point at Davos that safety is not binary: there is no single measure of safety, or of when something is safe enough. We need to break up the big fear into a few smaller areas of concern and manage them. Here are a couple:

Fake news and deepfakes are likely to proliferate in such an election-heavy year. We’ve already had a practice run with the recent Taylor Swift spike. The cat-and-mouse game of tracking fakes is under way, and the jury is out on whether we can actually identify fakes. On the other hand, this is creating a market for trust, and I’ve wondered for a while whether AI can be used to create a truth engine - a Snopes on steroids.

Growing inequality: in the short term, AI is likely to exacerbate inequality. The big 7 tech firms have apparently added a collective $4.6 tn of market value since ChatGPT was released, although there is a legitimate correlation vs causation question there. But could AI reduce long-term inequality and bootstrap the developing world in the future?

Whither AGI?

This one, too, is a bit fuzzy. By way of analogy, you might say that next December you would like to go to Sri Lanka. That’s fine, but when it gets to September and October, you’ll need to be more specific about where in Sri Lanka you want to go and what your itinerary might be.

AGI has been the long term goal for a while. But if we’re getting anywhere close to it, as some people seem to believe, we’re going to need a lot more specificity about what exactly constitutes AGI (and what does not). Maybe it’s time we had that conversation.

Job-Task Reconfiguration

AI automates tasks, not jobs. Jobs are composites of hundreds of individual tasks. Your job might be as a salesperson, but planning your itinerary and your calendar, or updating your CRM, are some of the many tasks you need to perform. AI can’t take over your sales job but it could automate a chunk of your tasks. This has many implications.

First, this will lead to a reconfiguration of tasks and jobs. Today’s jobs are particular clusters of tasks because that’s how the work has been organised for humans with varying skills and capabilities. With AI in the picture and picking up some of these tasks, the task configuration of a job will change. Consequently, human jobs will also see a reconfiguration of the tasks to be done, with some tasks dropping out altogether.

AI’s superpower is actually automating decision making. So the focus going forward will probably be on key decision points in the delivery of services and knowledge work. Especially those decisions which are driven by data and for which data is available to us or to the AI tool. While we might start with basic automation of ‘doing work’ such as booking a restaurant or calling a particular phone number, the future intelligent automation might help us to choose a restaurant given a configuration of people, or select a menu.

What About Jobs Lost?

This is a contentious topic with many strong views. In my personal opinion, jobs will be lost in two different ways:

First, we should expect a change in the number of people required to do a job in any given organisation. For jobs which are currently done by teams of people, we should expect a reduction in the size of those teams. This is basic maths. If 50 people perform a call centre job in a company, but now 40% of the job can be done by an AI tool, about 30 people will be required to perform the remaining 60% of the job. Or if the AI tool cuts your time to execute each unit of work by 50%, you will need half the people for the same amount of work. This is the productivity-based job loss.
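For anyone who wants to play with the numbers, here is a minimal sketch of that arithmetic. It assumes the total workload stays constant, that work divides evenly across people, and that no new oversight roles are created; the function name `remaining_headcount` is just an illustrative label.

```python
import math


def remaining_headcount(team_size: int, pct_work_removed: int) -> int:
    """People still needed once a percentage of the team's work is removed.

    Assumes a fixed workload spread evenly across the team (a simplification).
    Rounds up, because you cannot employ a fraction of a person.
    """
    if not 0 <= pct_work_removed <= 100:
        raise ValueError("pct_work_removed must be between 0 and 100")
    return math.ceil(team_size * (100 - pct_work_removed) / 100)


# 40% of a 50-person call centre job handed to an AI tool
print(remaining_headcount(50, 40))  # 30

# The tool halves the time per unit of work, so half the work-hours disappear
print(remaining_headcount(50, 50))  # 25
```

Both scenarios reduce to the same calculation: what matters is the share of human work-hours that is no longer needed.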

The other kind is where a particular job just ceases to exist because it has been completely reconfigured for a machine to execute. Switchboard operators and some production line workers are examples of people impacted. This is not a productivity improvement, but rather a factor substitution of labour with technology (capital). Imagine a factory production line with a thousand people. A new robotic automation replaces almost all of them, leaving 10 people in quality and adding another 40 in tech roles to manage and run the robots and software. Pure productivity arithmetic would suggest that 50 people are now doing the work of 1000, so there should be a 20x jump in productivity. Of course, this is spurious: the output now comes from the machines (capital), not from those 50 people working 20 times harder.
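The naive calculation in the factory example can be written out as below. This is only a sketch of the headcount ratio, assuming output is unchanged before and after; it deliberately ignores the capital invested in robots and software, and the function name is hypothetical.

```python
def naive_productivity_multiple(workers_before: int, workers_after: int) -> float:
    """Headcount ratio, assuming the same output before and after automation.

    Ignores the capital (robots, software) now doing the work, so it
    overstates any real gain in labour productivity.
    """
    return workers_before / workers_after


# 10 people remain in quality, 40 are added in tech roles
workers_after = 10 + 40
print(naive_productivity_multiple(1000, workers_after))  # 20.0
```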

A third way in which technology causes job erosion is through the democratization of expertise. A smartphone allows you and me to take a high-quality photograph that would once have required a professional photographer’s skills. Google Maps allows us to have the same knowledge of London streets that a black cab driver takes 3-5 years to master formally. This doesn’t cause job loss but it puts downward pressure on the price of expertise.

We should anticipate significant impact from both kinds of job loss over the next decade, although there is currently a lot of sugar-coating of this impact within the tech industry.

In fact, I’ve argued before that the idea that ‘you won’t lose your job to AI but you might lose your job to a person using AI’ is a red herring. It’s like saying that if you are a horse carriage driver, you won’t lose your job to the automobile, but you will lose it to somebody driving an automobile. Or that the Luddites didn’t lose their jobs to a textile machine, but to the people who operated it. It’s a dressed-up argument. By the way, this kind of intelligent automation expands the market as well, by bringing down the unit cost of the service and creating new jobs (think portrait vs photo), but there’s definitely a short-term job impact.

In short, the relationship between AI and the future of work is complex, with many distinct impact areas and causalities.

Beyond Job Loss

Just to remind ourselves, there are many categories of work that AI can potentially do which don’t involve a job loss. A year ago I wrote this list of jobs which can be done by machines or AI which have positive impact on human jobs. Here’s the list:

(1) Jobs that humans can't do, or have almost always been done by machines: e.g. designing and placing millions of transistors on microchips, space probes, indexing the whole internet.

(2) Dangerous jobs - with exposure to radiation, disease, or explosives: e.g. autonomous mine-sweeping robots

(3) Jobs that we apparently don't have enough people to do: truck driving, care work, fruit picking (in the UK).

(4) Jobs we do badly: lifting heavy loads, forecasting, or driving at night.

(5) Jobs which are increasingly too low value for people to do economically - e.g. dog-sitting or lawn-mowing

(6) Jobs which are low end components of high end jobs - e.g. paperwork done by a brain surgeon.

Remember though, that this is exactly the pattern described by Clay Christensen - we vacate these jobs and then the tech grows better and better and takes the jobs we do well or don’t want to give up. We know even creative jobs aren't safe, thanks to Generative AI.

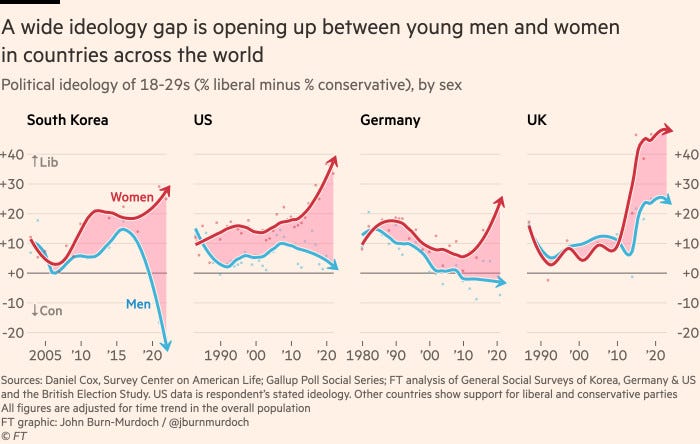

This Chart Is Breaking the Internet

Ok, to be more honest, let’s say many smart people across the world are pondering the causes and implications of this data, which suggests that a significant gap is opening up between young men and women in how liberal vs conservative they are. Carl Benedikt Frey suggests it might be a variance in the social media consumption that causes this. Here’s the original FT article.

AI Reading

AI & Trust: The obsolescence of trust is one of the big potential impacts of AI (Medium)

Apple Gen AI: Apple unveils Ferret.

AI Regulation: regulating AI in medicine.

Elon Musk Gen AI: yes, he’s interested and raising funds for an AI play

Other Reading This Week

Innovator: Nicholas Saunders, the forgotten man behind Neal’s Yard Dairy and Monmouth Coffee (if you’re a Londoner you will probably know and love these names), was a counterculture propagator and serial innovator. Here’s a lovely tribute to his life and work and his influence on British food and culture. (Guardian)

Aviation: Boeing’s troubles are well documented now, with loose bolts and planes grounded. But it’s a curious industry dominated by a duopoly, with the complexity of building planes requiring government support and backing. All of which is particularly interesting given the role of aviation in our world. Is it ripe for disruption? (Quartz)

Netflix: Has Netflix won the streaming wars? (FT)

Creativity: Unintended interruptions can boost creativity (HBR)

Battery Tech: A Cornell team has created a lithium battery that can charge in 5 mins (Fast Company)

Thanks for reading, have a great week!