#215: Neuromorphic Machines - Mimicking The Brain

Biomimicry meets the future of computing. It might be the great hope for AI, and could help us get beyond the limitations of current computing architectures.

Rethinking Computing

One of the fascinating challenges we face is to fundamentally rethink the concept of something like a computer. When you think of a computer, you’re probably instantly thinking of memory, processors, screen, keyboard, etc. But what if we started with a completely different set of assumptions about architectures, interfaces, and functioning? Would it even be a computer? For example, when you think of TV you think of a device, a broadcast mechanism, a type of content, and perhaps a kind of viewing experience. Now if you watch a recorded and streamed football game on your gaming device with a data stream alongside it, is it still television you’re watching? It’s not a TV set, it’s not a ‘broadcast’, and you’re not watching it in your living room with your family. Is this not television then? And if not, at what point did it stop being television? The same thing might be happening to computing, as we change assumptions and features one by one.

First, let’s talk about biomimicry:

Nature is an incredible engineer and designer, and examples abound of natural engineering that we would struggle to match or that we’ve taken inspiration from. Hence the term biomimicry. For example, the speed at which a woodpecker hits a tree with its beak should smash its skull, but it doesn’t. And so designers have looked to the woodpecker’s skull when designing crash helmets.

We have loads of examples - Velcro was designed to replicate burrs that stick to dogs’ fur. Robots that mimic the adhesive pads on gecko feet (which grip through tiny hairs rather than suction) to climb walls. Wind turbine blades that borrow the bumpy leading edge of humpback whale flippers. Swimsuits designed like sharkskin that could reduce drag (although probably not these ones). Bullet trains inspired by the kingfisher beak. Cooling systems that borrow from termite mounds. The list goes on.

So What’s Neuromorphic Computing?

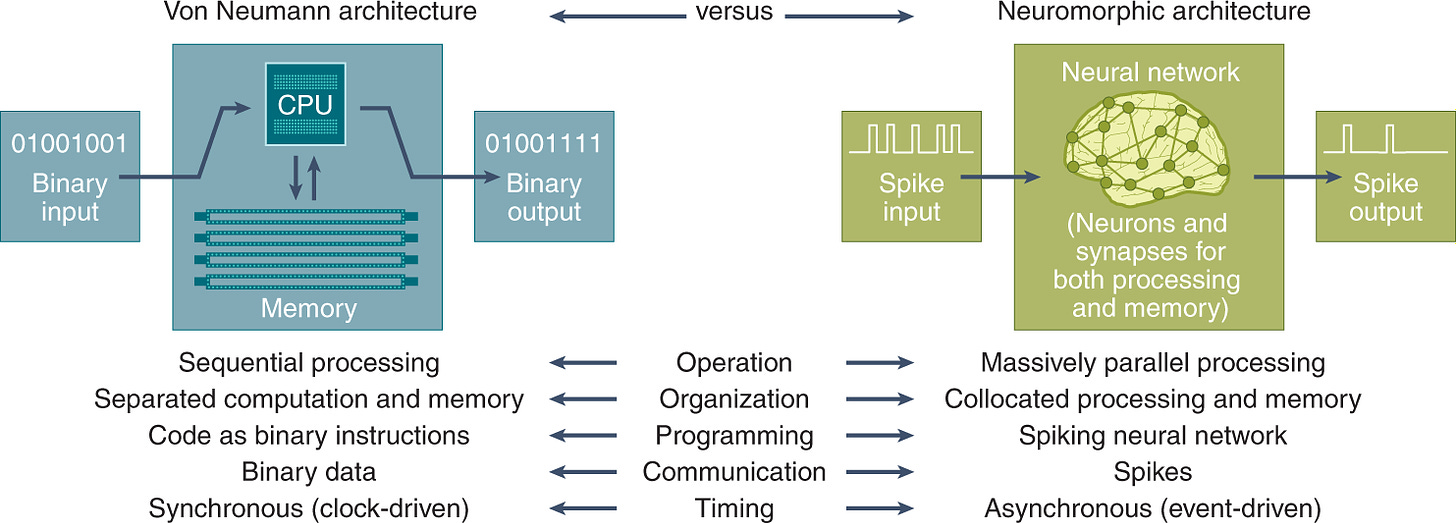

Neuromorphic computing is yet another ambitious example of biomimicry. It seeks to replace the traditional architecture of computing with something that borrows from the engineering of the human brain. Traditional computing is largely based on the von Neumann architecture, which separates processing from memory. The processing itself is driven by electrical circuits on a silicon wafer. As you well know, Moore’s law, which predicts that the number of transistors on a chip doubles roughly every two years, has held sway for decades, but many now believe that traditional computing is approaching its limits. There’s a problem of speed in ferrying data between memory and processor. And there’s the challenge of heat dissipation as you pack more and more circuits onto a wafer. Getting past this may require changing the fundamental architecture of computing. Neuromorphic computing is one such way. (Quantum computing is another.)

So what’s different about neuromorphic computing? Well, in short, almost everything. For example, traditional computers are fundamentally sequential in their working, depending on traditional mathematics. The human brain works in much fuzzier ways, achieves vast amounts of parallel and adaptive processing, and has learning built into the architecture.

Traditional computers use transistors as a bedrock to perform millions of calculations. Neuromorphic computers use artificial neurons made from various technologies like memristors (memory resistors, originally defined as linking magnetic flux and charge) or spintronics (which exploits the intrinsic spin of electrons, not just their charge), mimicking biological neurons and synapses. So the building blocks themselves are different. As a result, neuromorphic machines don’t process information as a steady stream of zeros and ones in the way conventional chips do. Information is instead carried by brief electrical impulses, or spikes, much as your brain uses ‘action potential’ signals that travel from neuron to neuron, say from a part of your body to your brain. Each neuron talks to many others, so signals travel fast and in fault-tolerant ways. Depending on the design, these spikes may be implemented in analog or digital circuits, and an active area of research is how best to make these spikes work.
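To make the idea of a spiking neuron a little more concrete, here is a minimal sketch of a "leaky integrate-and-fire" neuron, a textbook simplification and not how any particular chip works. The function name `simulate_lif` and the `threshold`, `leak`, and `reset` values are illustrative assumptions. The neuron accumulates input, slowly forgets it, and emits a spike only when its internal potential crosses the threshold.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_current: sequence of input values, one per time step.
    Returns a list with 1 where the neuron spiked and 0 elsewhere.
    All parameter values are illustrative assumptions.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # The potential decays a little each step, then integrates the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # fire a spike
            potential = reset  # and start over
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    # A weak, constant input only produces an occasional spike.
    print(simulate_lif([0.3] * 10))
```

Notice that the output is a sparse train of events rather than a number computed at every step, which is part of why such designs can be frugal with energy.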

Yet another difference is that learning mechanisms in traditional computing have been something of a retrofit, added through programming and algorithmic structures that need human design. By contrast, learning is built into the architecture of neuromorphic machines, which learn by adjusting connections between artificial neurons based on experience, potentially leading to autonomous learning. Remember, each neuron can store as well as transmit information.
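What might "adjusting connections based on experience" look like? One widely studied rule is spike-timing-dependent plasticity (STDP): a connection is strengthened when the sending neuron fires just before the receiving one, and weakened when the order is reversed. The article above does not name a specific rule, so treat this as one possible illustration. The function name `stdp_update` and every constant in it are assumptions chosen for readability.

```python
import math


def stdp_update(weight, dt, a_plus=0.05, a_minus=0.055, tau=20.0,
                w_min=0.0, w_max=1.0):
    """Return an updated synaptic weight using a simple pair-based STDP rule.

    dt is (time of post-synaptic spike - time of pre-synaptic spike), in ms.
    dt > 0: the input spike came first, so the connection is strengthened.
    dt < 0: the input spike came late, so the connection is weakened.
    All constants are illustrative assumptions.
    """
    if dt > 0:
        weight += a_plus * math.exp(-dt / tau)
    elif dt < 0:
        weight -= a_minus * math.exp(dt / tau)
    # Keep the weight inside its allowed range.
    return min(w_max, max(w_min, weight))


if __name__ == "__main__":
    w = 0.5
    print(stdp_update(w, dt=5.0))   # slightly above 0.5
    print(stdp_update(w, dt=-5.0))  # slightly below 0.5
```

The point of the sketch is that the update depends only on what the two connected neurons did, with no central program supervising it.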

A last and extremely important aspect is their energy consumption. The human brain is a magnificently designed processing environment that runs on about 20 watts of power. A traditional computer with the processing power of the human brain would be the size of a football field, and consume power equivalent to a similar-sized urban block. The Fugaku supercomputer draws about 28 MW (roughly 1.4 million times more). Neuromorphic computers also aim to mimic the efficient energy usage of the brain.
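The 1.4 million figure is simply the ratio of the two power numbers quoted above, as this quick check shows. The variable names are mine, and both inputs are the round figures from the text rather than precise measurements.

```python
# Round figures quoted in the text, both expressed in watts.
BRAIN_WATTS = 20
FUGAKU_WATTS = 28e6  # 28 MW

ratio = FUGAKU_WATTS / BRAIN_WATTS
print(f"Fugaku draws about {ratio:,.0f} times the power of a brain")
# prints: Fugaku draws about 1,400,000 times the power of a brain
```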

Are They Real?

Neuromorphic computers exist, although it would be fair to say that they are early versions, and scale is still a challenge. Examples of neuromorphic hardware include TrueNorth (IBM), Loihi (Intel), and blended models like Akida (BrainChip). These hardware systems have been used to demonstrate tasks like image recognition, pattern classification, and robotics control, showing their potential capabilities.

On the other hand, we have yet to see full-scale systems with billions of neurons - the hardware complexity and power consumption challenges are yet to be solved at scale. There is also very little by way of programming techniques or algorithms that fully utilize the abilities of the hardware design. Many aspects are still being researched. My colleagues at TCS are researching spike encoding for gesture recognition applications, for example.
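Spike encoding means turning ordinary data, such as a sensor reading, into a train of spikes. As a generic illustration (and not a description of the TCS work, whose method is not covered here), the sketch below uses simple rate coding: the stronger the input, the more often the neuron spikes. The function name `rate_encode` and the assumption that inputs are scaled to the range 0 to 1 are mine.

```python
def rate_encode(intensity, steps):
    """Encode a value in [0, 1] as a spike train using deterministic rate coding.

    An accumulator grows by `intensity` each step and emits a spike whenever
    it reaches 1, so the spike count is proportional to the intensity.
    This is a generic illustration, not any specific published scheme.
    """
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0 and 1")
    accumulator = 0.0
    spikes = []
    for _ in range(steps):
        accumulator += intensity
        if accumulator >= 1.0:
            spikes.append(1)
            accumulator -= 1.0
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    print(rate_encode(0.5, 8))   # spikes on every second step
    print(rate_encode(0.25, 8))  # spikes on every fourth step
```

Other schemes encode information in the timing of spikes rather than their frequency, and choosing between them is part of the open research mentioned above.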

Why Does It Matter?

We've reached a point where the tasks computers are doing are complex in multi-dimensional ways. It’s no longer about doing more number crunching in the traditional way. It increasingly involves parallel processing and learning behaviours. A useful analogy is phone cameras - it’s not good enough to just add megapixels, the cameras also have to get smarter. So while traditional computers excel at general-purpose tasks like calculations and simulations, neuromorphic computers (when they are used at scale) are expected to excel at applications such as real-time pattern recognition, robotics control, and brain-computer interfaces.

These are early days, but it’s likely that neuromorphic computing will become quite ubiquitous, especially where power and bandwidth are limited - such as satellite image processing. But more than anything else, neuromorphic computers may provide the biggest impetus for next-generation AI since GPUs.

Other Reading

Product Innovation - AeroPress: The story of the AeroPress coffee maker and how going against the grain of received wisdom sometimes works for a new idea or product. (FT)

AI for History: The story of how a contest helped AI researchers to decipher ancient Roman and Greek scrolls discovered in Herculaneum, the town buried, along with Pompeii, by the eruption of Mount Vesuvius in 79 AD. Human pattern recognition also plays a part in this story. (Business Week)

UI for AI - Could Apple have worked it out? Joshua Gans argues that Apple is likely to get the user experience of AI right, and that Apple have a track record of introducing or rather ‘seeding’ new categories. This also reflects my belief that significant adoption happens not when new tech is invented but when the user experience is evolved for mass appeal.

Google Gemini: Prof Ethan Mollick’s analysis of Gemini’s strengths is an excellent primer on how best to use Gemini if you’re starting out. Note: additional reporting for this piece was done by Gemini.

Thanks for reading and see you soon.