199: Intelligence: Beyond 'Human'

We need to stop using 'human' as the reference for the intelligence of AI systems.

Hello!

When the term 'Artificial Intelligence' was coined, the idea that a machine (or a piece of software) might one day achieve human-level intelligence was as far-fetched as a flight to Mars, or a 200-year lifespan. Yet all of those have now entered the realm of possibility, thanks to the ever-accelerating pace of technological evolution. AI in particular has reached the point of viability, and is forcing us to ponder human intelligence and creativity more objectively. Right from its naming, the preeminent thesis of AI has been that human intelligence is an unscalable pinnacle: a north star intended for guidance, rather than a real destination.

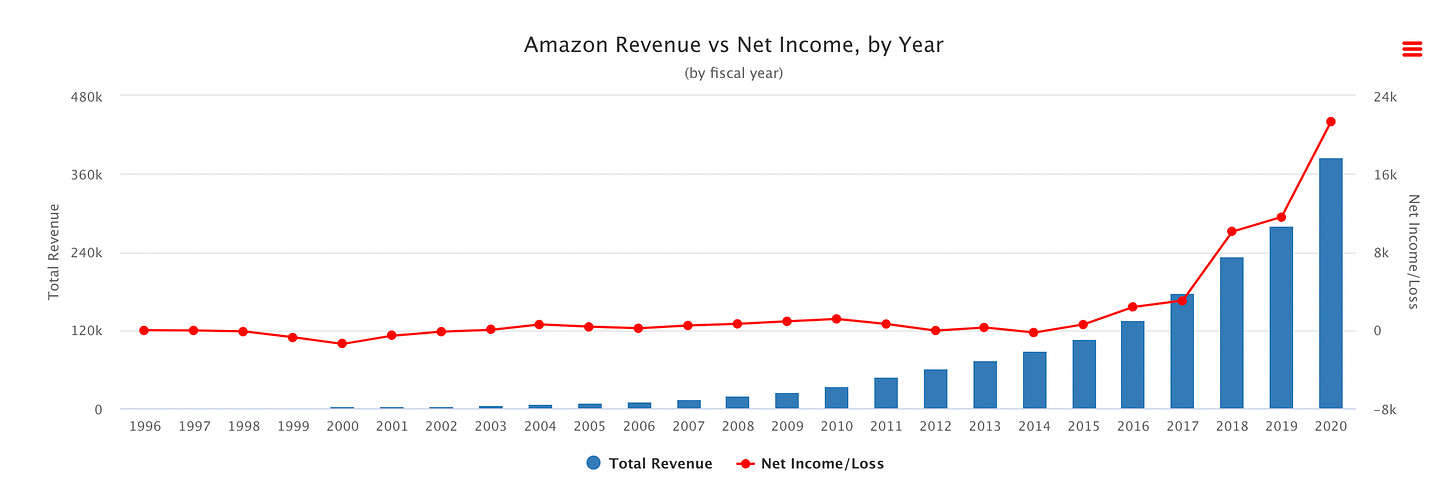

It's true that evolution has given us incredible intellectual firepower, but the most significant variable at play here is not the level of intelligence, but the acceleration. Most of us now realise the very real impact of exponential variables, thanks to the pandemic. What seems like an insignificant disease in a town in China one day can take over the world in a year's time. Or perhaps consider the net revenues of Walmart and Amazon in 2001 and in 2022.

We need to look at AI in much the same way. It has been around for about 50 years now (about 30 if you discount the period up to the end of the AI winter), compared to the roughly 3.7 billion years of the evolutionary process (or 300,000 years if you consider only Homo sapiens). The very fact that a comparison of any kind is debate-worthy should tell us that in another 50 years, this may not be a debate at all. This is rather well covered here.

Also, the evolutionary process as we know it is not goal-oriented. It's a series of 'short term' responses to stimuli that shape species over generations. Creationists might argue otherwise, but there is no grand design or reference architecture for human intelligence - it has haphazardly evolved to this point. Consequently, it has many built-in features which can act both as enablers and as flaws. Cognitive biases are among the most obvious ones - the same short-cuts that let us get through a vast number of daily decisions that would otherwise paralyse us also lead to errors of judgement in many complex evaluations. Here's an excellent book to understand the limitations of the evolutionary process.

Human society - our jobs, lives, homes, and cars - has evolved around human needs and intelligence. And similarly, there are many design flaws baked in which we just live with. Why are there blind-spots in cars after a hundred years of driving? Why do we still contract to work 8-hour shifts like in factories? Why does a message to my colleague sitting two desks away from me have to be routed through a server in California? These are all evolutionary bugs that we treat as features.

The use of human intelligence as a reference for AI is therefore specious and misleading. It does no favours to a meaningful appreciation of AI, and it sets an imprecise direction for its evolution. After all, if evolution had worked slightly differently millions of years ago, it might have been the octopus (aka Octopus vulgaris) rather than Homo sapiens that was the pre-eminent intelligent life form on the planet today! Here's a good book about the octopus mind.

Just to be clear - 'human intelligence' is not a clear goal, and lacks metrics. For example, if these two circles represent the typical intelligence range from average to high, for humans…

… then an individual person's intelligence might look like this:

In other words, a scientist who can solve complex mathematical problems might struggle to drive a car, remember a grocery list, or manage his or her finances. So when we say human intelligence, do we mean an ability to do grocery shopping? To calculate, remember, and file taxes? Or to solve mathematical problems that have puzzled scientists for centuries? As you can see, these are all vastly different types and levels of intelligence.

Which is why we need a much more objective view of intelligence rather than simply one that is pegged to humans.

After all, a typical (narrow) AI might look a little bit like this:

It can do a very specific activity very well, in fact far better than any human could aspire to, without necessarily being able to do anything else at anything close to human level. The language models behind generative AI are exactly this, which is why they often struggle with elementary maths.

This also ties intelligence to the task and context. This might seem restrictive, but in reality humans are exactly like this: we are all good at specific things. But given how much human performance varies, we should stop using it as a metric. At best, it's a crude benchmark when seen as a range of capability. So in the example above, the AI has gone beyond the range over which a typical human might operate. In order to evaluate AI, therefore, we need calibration well beyond the human range, but more importantly, we need a framework that does not use human intelligence as a basis.

Such a calibration or framework for intelligence would still need to deliver incremental benefits for a world designed for and around human intelligence and behaviour. For example, we would like our cars to be faster and safer on our current roads, rather than rethink transport systems from scratch. A very simple starting point for this could potentially be built around two key rules.

First, that it delivers faster and/or improved decision-making for a specific task. The calibration will follow from the best currently available options - human or machine driven.

And second, a principle of Pareto superiority - that is, it doesn't make anything else worse. This allows various forms of 'narrow intelligence' to flourish without necessarily tying them to Artificial General Intelligence or the human model.
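For the more hands-on readers, here is one way the two rules could be sketched in Python. This is only an illustration under my own assumptions: the `Option` class, the dimension names, and every score below are made up for the example, and "higher is better" is assumed for all scores. The baseline stands for the best currently available option, human or machine.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Option:
    """A way of doing a task: a human process, an existing tool, or a new AI."""
    name: str
    # Hypothetical scores per dimension; higher is assumed to be better.
    scores: Dict[str, float]


def improves_task(candidate: Option, baseline: Option, task_dims: List[str]) -> bool:
    """Rule 1: strictly better than the baseline on at least one task dimension."""
    return any(
        candidate.scores.get(d, float("-inf")) > baseline.scores[d]
        for d in task_dims
    )


def makes_nothing_worse(candidate: Option, baseline: Option) -> bool:
    """Rule 2 (Pareto superiority): no dimension scored for the baseline gets worse."""
    return all(
        candidate.scores.get(d, float("-inf")) >= baseline.scores[d]
        for d in baseline.scores
    )


def passes_both_rules(candidate: Option, baseline: Option, task_dims: List[str]) -> bool:
    return improves_task(candidate, baseline, task_dims) and makes_nothing_worse(
        candidate, baseline
    )


# Illustrative numbers only, normalised so the current best option scores 1.0.
current_best = Option("current best", {"speed": 1.0, "quality": 1.0, "safety": 1.0})
careful_ai = Option("careful AI", {"speed": 1.4, "quality": 1.0, "safety": 1.0})
reckless_ai = Option("reckless AI", {"speed": 2.0, "quality": 1.0, "safety": 0.8})

task = ["speed", "quality"]
careful_ok = passes_both_rules(careful_ai, current_best, task)
reckless_ok = passes_both_rules(reckless_ai, current_best, task)
```

In this toy setup the 'careful' system passes both rules, while the 'reckless' one is faster at the task but fails the second rule because it makes safety worse. Note that nothing here refers to a human level of intelligence: the only reference point is whatever the best current option happens to be.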

After all, to err is human and that is not very useful as a metric, is it?

Reading List

Healthcare: Is NHS Broken? - this is one part of a two-part comprehensive view of the challenges facing the NHS. And here’s the second part. (FT)

Healthy Ageing: This piece explores the 60-year career, as people live and work longer. And this one asks why more tech isn’t used to address loneliness for older people. (WSJ)

Generative AI: What’s getting funded (Crunchbase)

Generative AI: The coming Search Wars (Economist)

Society 4.0: Jeff Jarvis suggests that ‘Journalism is Lossy Compression’. (Medium)

Society 4.0: Shoshana Zuboff on why Privacy is a Zombie (FT)

AI: The Deepfake Cottage Industry (IEEE Spectrum)

Ethics: The De-extinction of the Dodo - why, not how (Wired)

Innovation: How Microsoft became innovative again (HBR)

Have fun and see you in a couple of weeks.

Ved